Migration and immigration shape economies, societies, and international relations, which makes it important to understand how these movements are measured. Behind the headlines and statistics lies a set of technical frameworks designed to capture human mobility. The story the data tell is only as reliable as the way the data are gathered and interpreted, and the resulting figures feed directly into policy decisions. This article examines the interplay between the methodologies used to analyze migration flows, the challenges of ensuring data fidelity, and the role these insights play in crafting informed, effective policies.

Unraveling Structural Models in Migration Flow Analysis

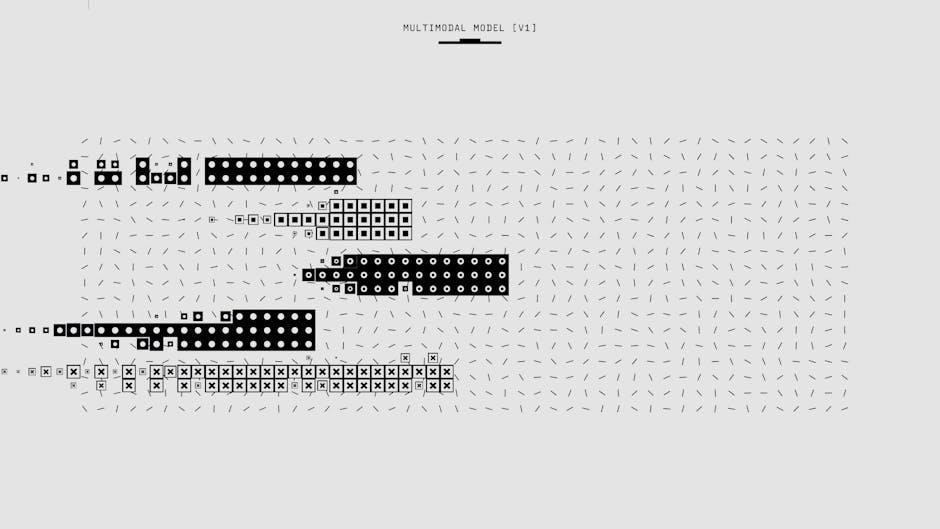

Structural models in migration flow analysis explicitly represent the decision-making processes behind individual and aggregate migration behavior. They integrate economic, social, and policy variables into a formal framework, such as a discrete choice model or a dynamic programming approach, so that the causal mechanisms driving migration can be quantified. Central to their design are constraints such as labor market access, legal barriers, and information asymmetries, which shape migrants’ utility maximization problems. For instance, the classic Roy model of self-selection can accommodate heterogeneous skill distributions across origin and destination regions, allowing researchers to simulate how wage differentials and employment probabilities interact to shape migration flows. Structural models also support counterfactual scenario analysis, which is critical for policy impact assessment: they allow researchers to estimate how hypothetical changes, such as tightening visa restrictions or introducing integration policies, would alter migration patterns.
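The discrete choice and counterfactual ideas above can be illustrated with a minimal multinomial logit sketch. Everything specific here is an assumption made for illustration: the utility form (log expected income minus a moving cost), the coefficient values, the destination names, and the numbers are hypothetical and not estimated from any data.

```python
import math

def migration_utility(wage, employment_prob, migration_cost,
                      beta_income=1.0, beta_cost=1.0):
    """Deterministic utility: log expected income minus a weighted moving cost.

    The functional form and coefficients are assumptions for illustration.
    """
    return beta_income * math.log(wage * employment_prob) - beta_cost * migration_cost

def choice_probabilities(utilities):
    """Multinomial logit probabilities: P(j) = exp(V_j) / sum_k exp(V_k)."""
    v_max = max(utilities.values())  # subtract the max for numerical stability
    exp_v = {name: math.exp(v - v_max) for name, v in utilities.items()}
    total = sum(exp_v.values())
    return {name: e / total for name, e in exp_v.items()}

def simulate(options):
    """Map each option's attributes to a utility, then to a choice probability."""
    utilities = {name: migration_utility(**attrs) for name, attrs in options.items()}
    return choice_probabilities(utilities)

# Hypothetical attributes for staying and for two destinations.
baseline = {
    "stay":   {"wage": 12000.0, "employment_prob": 0.85, "migration_cost": 0.0},
    "dest_a": {"wage": 30000.0, "employment_prob": 0.70, "migration_cost": 0.8},
    "dest_b": {"wage": 24000.0, "employment_prob": 0.80, "migration_cost": 0.5},
}

# Counterfactual: tighter visa rules, modeled as a higher cost of reaching dest_a.
counterfactual = {name: dict(attrs) for name, attrs in baseline.items()}
counterfactual["dest_a"]["migration_cost"] = 1.5

p_base = simulate(baseline)
p_cf = simulate(counterfactual)
for name in baseline:
    print(f"{name}: baseline={p_base[name]:.3f} counterfactual={p_cf[name]:.3f}")
```

In this sketch the higher cost lowers the predicted share choosing `dest_a` and shifts it proportionally to the other options, a known property of the plain logit form. A richer structural model would relax that assumption.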

Evaluating structural models requires close attention to identification strategy and parameter estimation to ensure robustness and predictive validity. Key performance metrics include the model’s ability to replicate observed migration patterns and to forecast responses to exogenous shocks. Estimation methods such as the Generalized Method of Moments (GMM) or Maximum Likelihood Estimation (MLE), combined with an explicit model of the selection process, help address the endogeneity and sample selection biases common in migration data. An advantage of structural approaches over their reduced-form counterparts is their explicit incorporation of latent decision variables and policy constraints, which enables nuanced policy simulations. Their limitations are computational complexity and the need for rich micro-level data, which often constrain how well a model scales across diverse migration corridors. The following table summarizes core considerations:

| Aspect | Description | Example |
|---|---|---|
| Mechanism | Utility maximization under constraints, capturing individual migration motivation | Structural discrete choice migration model incorporating visa quotas |
| Process Logic | Sequential decision stages: information gathering, destination choice, migration execution | Dynamic programming model simulating multi-period migration decisions |
| Constraints | Legal restrictions, social networks, and economic barriers limiting migration options | Incorporation of border enforcement levels in structural estimation |
| Evaluation Criteria | Goodness-of-fit, parameter identifiability, out-of-sample predictive accuracy | Comparison of model forecasts to recent census migration data |

Evaluating Data Integrity and Accuracy in Immigration Studies

- Mechanisms for Verifying Data Integrity: Ensuring data integrity in immigration studies means validating the completeness, consistency, and reliability of datasets collected from multiple sources such as border control agencies, census surveys, and immigration petitions. Cross-referencing data points via unique identifiers (e.g., passport numbers or biometric markers) confirms that records from different sources refer to the same person, while checksums and cryptographic hashes computed during data transmission make tampering or corruption detectable. Additionally, longitudinal consistency checks help detect anomalies, such as improbable age progressions or residence durations, that might indicate data entry errors or fraudulent submissions.

- Evaluation Criteria and Process Logic: Accuracy is assessed not just by correctness but through triangulation—comparing multiple independent sources to minimize biases and detect discrepancies. Metrics like completeness (percentage of missing values), precision (granularity of location or status details), and temporal accuracy (time lag between event and reporting) form core criteria. Establishing data lineage and audit trails using metadata helps chart the evolution of datasets through collection, cleaning, and aggregation stages, facilitating repeatability and trust. For example, aligning administrative records with survey responses can highlight under-reporting of undocumented migration flows, prompting necessary data adjustments.
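Three of the checks described above (a cryptographic digest per record, a completeness ratio, and a longitudinal age-progression check) can be sketched as follows. The record layout, field names (`person_id`, `year`, `age`, `status`), and the one-year tolerance are assumptions chosen for illustration, not a standard schema.

```python
import hashlib
import json
from collections import defaultdict

def record_digest(record):
    """SHA-256 digest of a record, serialized with sorted keys so field order does not matter."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def completeness(records, fields):
    """Share of required field values that are present (not None and not empty)."""
    total = len(records) * len(fields)
    if total == 0:
        return 1.0
    present = sum(1 for r in records for f in fields if r.get(f) not in (None, ""))
    return present / total

def age_progression_anomalies(records, tolerance=1):
    """Flag consecutive observations whose change in age disagrees with the elapsed years."""
    by_person = defaultdict(list)
    for r in records:
        if r.get("age") is not None:
            by_person[r["person_id"]].append(r)
    anomalies = []
    for person_id, obs in by_person.items():
        obs.sort(key=lambda r: r["year"])
        for prev, curr in zip(obs, obs[1:]):
            elapsed = curr["year"] - prev["year"]
            aged = curr["age"] - prev["age"]
            if abs(aged - elapsed) > tolerance:
                anomalies.append((person_id, prev["year"], curr["year"]))
    return anomalies

# Hypothetical records; P2 ages six years in one calendar year and has a blank status.
records = [
    {"person_id": "P1", "year": 2018, "age": 30, "status": "work_visa"},
    {"person_id": "P1", "year": 2020, "age": 32, "status": "resident"},
    {"person_id": "P2", "year": 2018, "age": 41, "status": "student"},
    {"person_id": "P2", "year": 2019, "age": 47, "status": ""},
]

sent_digest = record_digest(records[0])            # computed by the sender
received_ok = record_digest(records[0]) == sent_digest  # recomputed on receipt
share_complete = completeness(records, ["age", "status"])
flagged = age_progression_anomalies(records)
print(received_ok, share_complete, flagged)
```

A digest only shows that a record changed between two points. It says nothing about whether the original entry was correct, which is why the consistency and completeness checks are run separately.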

| Specification | Common Constraints | Performance Variables |
|---|---|---|
| Timeliness of Data Entry | Reporting delays, batch processing schedules | Latency in data availability for analysis |
| Standardization of Formats | Diverse coding schemes, language differences | Interoperability and ease of dataset integration |
| Data Granularity | Privacy limitations, aggregation policies | Detail level for subgroup analyses |

Compared to raw administrative data, survey-based datasets often introduce sampling biases or recall inaccuracies, necessitating sophisticated weighting adjustments and error modeling. Constraints such as privacy regulations may limit access to personally identifiable information, enforcing anonymization standards that can reduce the granularity and hence the precision of mobility pattern analysis. Researchers must balance these limitations against the need for transparent and reproducible methods. Furthermore, performance variables such as update frequency and data refresh rates directly impact the responsiveness of migration monitoring systems, influencing policy decisions that require timely insights into emerging trends or crises.
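One common form of the weighting adjustment mentioned above is post-stratification, where each respondent is weighted by the ratio of their group's population share to its sample share. The sketch below assumes the population shares are known from an external source such as a census. The stratum names, shares, and responses are hypothetical.

```python
from collections import Counter

def post_stratification_weights(sample_strata, population_shares):
    """Weight per stratum = population share / sample share."""
    n = len(sample_strata)
    counts = Counter(sample_strata)
    return {s: population_shares[s] / (counts[s] / n) for s in counts}

def weighted_mean(values, strata, weights):
    """Mean of values where each observation carries its stratum's weight."""
    numerator = sum(weights[s] * v for v, s in zip(values, strata))
    denominator = sum(weights[s] for s in strata)
    return numerator / denominator

# Hypothetical survey: recent arrivals are under-sampled (20% of the sample,
# 50% of the assumed population).
strata = ["recent"] * 2 + ["settled"] * 8
moved_last_year = [1, 1, 0, 0, 0, 0, 1, 0, 0, 0]  # 1 = respondent reports a move
population_shares = {"recent": 0.5, "settled": 0.5}

weights = post_stratification_weights(strata, population_shares)
unweighted = sum(moved_last_year) / len(moved_last_year)
weighted = weighted_mean(moved_last_year, strata, weights)
print(f"unweighted={unweighted:.4f} weighted={weighted:.4f}")
```

The adjustment corrects only for imbalance on the stratifying variable. Recall error and bias within a stratum need separate error modeling.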

Engineering Frameworks for Policy Impact Measurement

Engineering frameworks designed for policy impact measurement in migration and immigration contexts must integrate multi-source data aggregation, causal inference algorithms, and dynamic modeling to capture both immediate and latent effects. Key mechanisms include counterfactual scenario simulation—using techniques like synthetic control methods—and longitudinal tracking of migrant cohorts via linked administrative datasets. Evaluation criteria focus on validity (internal and external), temporal resolution, and sensitivity to policy variations. Performance variables such as model accuracy, bias reduction, and computational scalability are critical, especially when dealing with large-scale heterogeneous data from border control systems, social services, and labor markets. Additionally, frameworks must specify modular components to facilitate iterative updates as new data streams emerge, ensuring adaptability to policy shifts or migratory pattern changes.

Constraints typically arise from data incompleteness, privacy-preserving transformations (e.g., differential privacy or k-anonymization), and legal restrictions, all of which affect parameter identifiability and estimator consistency. To mitigate these, frameworks employ constrained optimization and robust statistical estimators that tolerate noisy or missing data without sacrificing interpretability. Comparing agent-based modeling with system dynamics highlights the trade-off between granularity and computational demand: agent-based models excel at capturing individual migrant decision-making but often require extensive calibration, whereas system dynamics models focus on aggregate flows and compute faster. The following table outlines essential specifications and their implications:

| Specification | Implications | Example Frameworks |
|---|---|---|
| Causal Inference Integration | Enables policy effect attribution, reduces confounding bias | Difference-in-Differences, Instrumental Variables |
| Data Linkage & Harmonization | Improves cohort tracking, consistency across sources | Record Linkage Algorithms, ETL Pipelines |
| Privacy-Preserving Techniques | Maintains confidentiality while limiting the loss of data utility | Differential Privacy, Secure Multi-party Computation |
| Scalability & Performance | Enables real-time or near-real-time analysis of migration flows | Distributed Computing, Cloud-Based Analytics |

- Process Logic: Modular pipelines start with data ingestion and preprocessing, proceed through causal effect estimation and validation, and conclude with scenario simulations and policy recommendation outputs.

- Evaluation: Frameworks are benchmarked via cross-validation against known policy changes, with stress tests under extreme migratory events to ensure robustness.
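The causal effect estimation step of such a pipeline can be illustrated with the simplest two-group, two-period difference-in-differences estimator. This is a sketch under the usual parallel-trends assumption (the control group shows how the treated group would have evolved without the policy). The arrival counts are invented for illustration.

```python
from statistics import mean

def difference_in_differences(treated_pre, treated_post, control_pre, control_post):
    """2x2 DiD: change in the treated group minus change in the control group."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change

# Hypothetical monthly arrivals in regions affected and unaffected by a policy change.
treated_pre, treated_post = [100, 110], [130, 140]
control_pre, control_post = [90, 100], [100, 110]

effect = difference_in_differences(treated_pre, treated_post, control_pre, control_post)
print(f"estimated policy effect: {effect:+.1f} arrivals per month")
```

A production framework would typically estimate this through a regression with standard errors and check pre-policy trends before trusting the estimate.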

Performance Metrics and Constraints in Migration Dynamics

- Performance metrics in migration dynamics primarily focus on quantifiable indicators such as net migration rates, integration indices, labor market absorption efficiency, and remittance flow stability. These metrics assess both the volume and quality of migration outcomes. For instance, the integration index may incorporate variables like language proficiency, employment rates among immigrants, and social inclusion measures, combined into a composite score to gauge policy effectiveness. Evaluating the temporal progression of these metrics allows analysts to identify lag effects or systemic bottlenecks, such as persistent underemployment despite high educational attainment among migrants.

- Migration processes must be analyzed within specific operational constraints, including geopolitical boundaries, legal frameworks, and logistical capacities. For example, visa quota limitations create upper bounds that not only limit migrant inflows but also influence irregular migration patterns, necessitating nuanced interpretations of migration data. Performance variables such as processing time for asylum applications or border inspection rates act as throughput parameters, whose optimization impacts the accuracy and timeliness of migration statistics. These constraints interact dynamically; thus, models often employ feedback loops and sensitivity analysis to reconcile policy ambition with practical enforceability, ensuring metrics embody realistic migratory flows rather than idealized or projected values.
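A composite integration index of the kind described above can be sketched as a weighted average of component scores. The component names, the scaling of each score to the range 0 to 1, and the weights are all assumptions for illustration. Real indices differ in how they normalize and weight their components.

```python
def integration_index(components, weights):
    """Weighted average of component scores that are already scaled to [0, 1]."""
    for name, score in components.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"component {name!r} must be scaled to [0, 1], got {score}")
    total_weight = sum(weights[name] for name in components)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[name] * score for name, score in components.items()) / total_weight

# Hypothetical component scores for one cohort and hypothetical weights.
components = {"language_proficiency": 0.6, "employment_rate": 0.7, "social_inclusion": 0.5}
weights = {"language_proficiency": 0.4, "employment_rate": 0.4, "social_inclusion": 0.2}

score = integration_index(components, weights)
print(f"integration index: {score:.2f}")
```

Computing the same index for successive years of one cohort is one way to surface the lag effects mentioned above, for example an employment component that stays flat while language proficiency rises.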

| Performance Variable | Description | Typical Constraint | Impact on Metrics |
|---|---|---|---|
| Processing Time | Duration from application submission to decision | Administrative capacity, documentation completeness | Delays cause lag in migration stock data and policy responsiveness |
| Border Inspection Rate | Proportion of migrants physically screened | Resource allocation, border infrastructure | Lower rates increase uncertainty in arrival counts and irregular migration estimates |
| Quota Utilization | Percentage of permitted migration slots used | Policy limits, international agreements | Restrictive quotas can skew metrics by encouraging off-record migration channels |
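The three performance variables in the table can be computed directly from case-level and aggregate records. The sketch below assumes a hypothetical record layout with `submitted` and `decided` dates, where undecided applications carry `None`. All figures are invented.

```python
from datetime import date
from statistics import median

def processing_days(applications):
    """Days from submission to decision, skipping applications still pending."""
    return [(a["decided"] - a["submitted"]).days
            for a in applications if a["decided"] is not None]

def ratio(numerator, denominator):
    """Share helper used for both inspection rate and quota utilization."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return numerator / denominator

# Hypothetical asylum applications; the third is still pending.
applications = [
    {"submitted": date(2023, 1, 10), "decided": date(2023, 3, 11)},
    {"submitted": date(2023, 2, 1), "decided": date(2023, 5, 2)},
    {"submitted": date(2023, 4, 15), "decided": None},
]

durations = processing_days(applications)
median_days = median(durations)
pending_share = ratio(len(applications) - len(durations), len(applications))
inspection_rate = ratio(8200, 10000)    # screened arrivals / recorded arrivals
quota_utilization = ratio(4500, 5000)   # admitted / permitted slots
print(median_days, round(pending_share, 3), inspection_rate, quota_utilization)
```

Reporting the pending share next to the median matters because a median computed only over decided cases understates processing time when a backlog is growing.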

Comparative Assessment of Analytical Techniques in Migration Research

- Quantitative Statistical Models: Predominantly employing regression analysis, Markov chains, and agent-based simulations, quantitative models prioritize statistical robustness and predictive accuracy. These models leverage large datasets, such as census figures, border crossing records, and labor market statistics, emphasizing variable interdependencies like economic indicators, policy changes, and demographic shifts. The primary evaluation criteria here include model fit (e.g., R², Akaike Information Criterion), sensitivity (response to parameter variations), and out-of-sample predictive performance. For instance, linear regression models estimating remigration rates often contend with multicollinearity and missing data biases, which necessitate rigorous preprocessing like imputation and variable selection algorithms. Mechanistically, agent-based models simulate individual migration decisions based on predefined behavioral rules, allowing nuanced scenario testing but demanding substantial computational resources and calibration efforts.

- Qualitative and Mixed-Methods Approaches: Anchored in ethnographic fieldwork, in-depth interviews, and policy discourse analysis, qualitative methodologies contextualize migration phenomena beyond numeric trends. Their process logic prioritizes thematic coding, narrative synthesis, and triangulation of sources to capture the socio-political dynamics that influence migration flows, such as visa regulations or integration policies. Qualitative methods lack the scalability of quantitative tools; mixed-methods designs compensate by combining surveys with follow-up interviews to validate and enrich statistical findings, addressing constraints in data granularity and participant heterogeneity. Performance variables for qualitative assessments emphasize depth, transferability, and reflexivity, with techniques like intercoder reliability checks enhancing credibility. The table below contrasts core performance dimensions across methodologies:

| Criterion | Quantitative Models | Qualitative/Mixed-Methods |
|---|---|---|
| Data Volume | High (thousands to millions of records) | Low to moderate (dozens to hundreds of cases) |
| Analytical Flexibility | Moderate (statistical constraints) | High (adaptive thematic exploration) |
| Reproducibility | High (based on documented algorithms) | Moderate (dependent on interpretive frameworks) |
| Contextual Depth | Limited (population-level insights) | Extensive (individual and policy-level insights) |
| Computational Demand | Variable (simple to complex simulations) | Low (primarily human-coded analysis) |
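The intercoder reliability check mentioned for qualitative work is often reported as Cohen's kappa, which corrects raw agreement between two coders for the agreement expected by chance. The sketch below is one way to compute it. The theme labels and codings are hypothetical.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two coders: (observed - expected) / (1 - expected)."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    # Chance agreement: probability both coders pick the same category independently.
    expected = sum((freq_a[c] / n) * (freq_b[c] / n) for c in set(freq_a) | set(freq_b))
    if expected == 1.0:
        return 1.0  # both coders used a single identical category throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical thematic codes assigned to four interview excerpts by two coders.
coder_a = ["economic", "economic", "family", "family"]
coder_b = ["economic", "family", "family", "family"]

kappa = cohens_kappa(coder_a, coder_b)
print(f"Cohen's kappa: {kappa:.2f}")
```

Here the coders agree on three of four excerpts, but half of that agreement would be expected by chance, so kappa is lower than the raw agreement rate.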

Final Thoughts

In tracing the intricate pathways of migration and immigration, this exploration has illuminated the vital role of robust technical frameworks, the challenges and rewards of ensuring data accuracy, and the nuanced ways policies ripple through human mobility patterns. As borders evolve not just on maps but in the lived experiences of millions, the quest for clearer, more precise analysis becomes ever more critical. Ultimately, understanding these dynamics is not just a technical exercise—it is a key to shaping thoughtful policies that honor both the complexity of movement and the dignity of those who journey. As researchers and policymakers continue to refine their tools and approaches, the hope remains that this shared knowledge will guide more informed, compassionate responses to one of the defining phenomena of our time.